- Estado

Trending Tags

- Municipios

- México y el Mundo

- Política

- Opinión

Trending Tags

- Más…

Trending Tags

- Estado

Trending Tags

- Municipios

- México y el Mundo

- Política

- Opinión

Trending Tags

- Más…

Trending Tags

MINUTO A MINUTO

NOTICIAS DEL ESTADO

Cae el hermano de “El Mencho”, en Jalisco

Abraham Oseguera Cervantes, alias “Don Rodo”, hermano de Nemesio Oseguera “El Mencho” fue detenido la madrugada de este domingo en...

Leer más..POLÍTICA

SEGURIDAD

Cae el hermano de “El Mencho”, en Jalisco

Abraham Oseguera Cervantes, alias “Don Rodo”, hermano de Nemesio Oseguera “El Mencho” fue detenido la madrugada de este domingo en la localidad Autlán de Navarro Jalisco. La detención se efectuó...

Leer más..LA NOTA DEL DÍA

¡Otra vez! Fresnillo y Zacatecas en los primeros lugares de ciudades con mayor percepción de inseguridad

Fresnillo y Zacatecas siguen siendo de las ciudades más inseguras del país. Frenillo es la ciudad con mayor percepción de inseguridad en México, mientras que Zacatecas es la tercera, según informó este jueves el Instituto Nacional de Estadística...

Leer más..EDUCACIÓN

Maestros estatales demandan aumento salarial y toman las instalaciones de la SEC

Esta mañana docentes de preparatorias estatales tomaron la Secretaría de Educación del gobierno del estado, en demanda de certeza laboral, salarios dignos y otorgamiento de plazas a maestros con más antigüedad. José Antonio Villa ,docente...

Leer más..TINTA INDELEBLE

Código político: Fresnillo, capital del secuestro

Por Juan Gómez Con una población de aproximadamente 240 mil habitantes y ubicada en el centro norte del pais, Fresnillo,...

No existe democracia donde hay miedo

A 46 días de que se lleve a cabo la elección más grande de la historia de México, en el...

ENTRESEMANA/ El pobre exministro del presidente

Por MOISÉS SÁNCHEZ LIMÓN O lo que es lo mismo: “quieren bajarme del barco a como dé lugar”, según se...

MÉXICO Y EL MUNDO

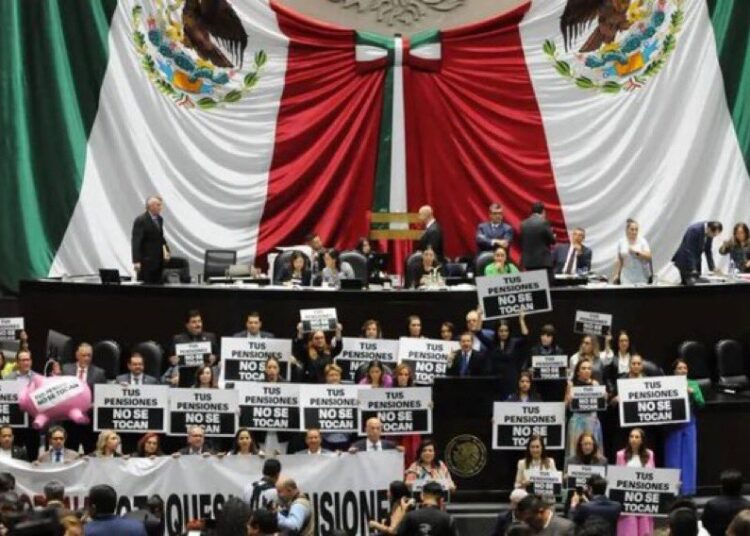

Se aprueba en lo general, la reforma por la que se crea el sistema de Pensiones para el Bienestar

Este lunes 22 de abril fue aprobado en el pleno de la Cámara de Diputados, la reforma por la que...

Retén de encapuchados que se acercó a Sheinbaum “fue un montaje”, asegura AMLO

El presidente Andrés Manuel López Obrador aseguró que el retén que se acercó a Claudia Sheinbaum en Chiapas se trató...

El Tiempo

Las populares de la semana

-

Código político: Fresnillo, capital del secuestro

0 Interacciones -

Cae el hermano de “El Mencho”, en Jalisco

0 Interacciones -

Liberan a 4 jóvenes secuestrados; una era mujer de 18 años

0 Interacciones -

Debe prevalecer la unidad, si se quiere consolidar el triunfo, dice Saúl Monreal

0 Interacciones -

Amalia García pide el respaldo electoral, para seguir trabajando con dignidad por los zacatecanos

0 Interacciones

Pórtico en Spotify

Copyright © 2021 Pórtico Mx

ORgullosamente un diseño y desarrollo de Omar Reyes